- 3 Minutes to read

- Print

- DarkLight

- PDF

Automatic Schema Drift Management

- 3 Minutes to read

- Print

- DarkLight

- PDF

Introduction

Schema drift refers to the gradual changes in the structure of data in a Source over time. These changes can have a significant impact on the integrity, and accuracy of data being replicated to a destination Target, such as a data warehouse.

Rivery provides automatd solutions for extracting, transforming, and loading data from various Sources into a Target. One of the key features is the ability to detect and handle schema changes in the Source.

Here's an Illustration of Schema Drift

Customers Table: Initial Structure

| ID | Name | Address | Phone Number |

|---|---|---|---|

| 1 | Jane | 500 7th Avenue | 5551235555 |

| 2 | John | 16 Great Chapel Street | 5554565556 |

Customers Table: After Schema Drift

| ID | Name | Address | Phone Number | Social Media Handle | |

|---|---|---|---|---|---|

| 1 | Jane | 500 7th Avenue | 555-123-5555 | Null | Null |

| 2 | John | 16 Great Chapel Street | 555-456-5556 | Null | Null |

| 3 | Bob | 14425 Falcon Head Blvd | (+1)555-789-5559 | bob.smith@rivery.com | @bobsmith |

The Customers database initially included four columns, as seen in this example: "ID," "Name," "Address," and "Phone Number." However, due to schema drift, the table now has two extra columns, "Email" and "Social Media Handle," and the "Phone Number" column's data type has been changed from integer to string.

Handling Schema Changes

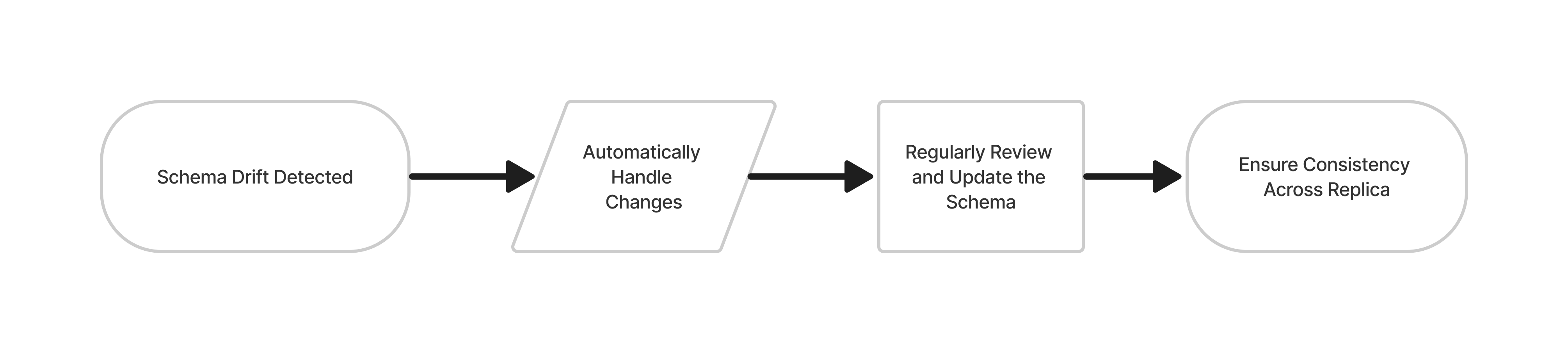

When a schema change is detected in the source, Rivery automatically updates the destination target's schema to match the new structure. This process happens without disrupting data flow or requiring manual intervention, whether through Standard extraction or Change Data Capture (CDC).

Rivery continuously compares the source's schema with its metadata—a record of the source's structure and attributes—to detect changes. On each run, Rivery resyncs the metadata with the source, ensuring they are always aligned. The metadata then serves as the foundation for data processing.

New Field Detection and Processing

When Rivery detects a new field in the source schema, such as 'ExcludeInMDM', it appears in the Rivery interface. However, for sources like SQL Server using Change Tracking, Rivery only updates the target schema (e.g., Snowflake) when processing new or modified data. This means the new field won't be reflected in Snowflake until it is included in new or updated data for the relevant table.

If the new field is created with a default value (e.g., 0), this action isn’t considered a data modification by Change Tracking. Therefore, Rivery does not recognize it as a change that would trigger a schema update in the target. The new field will remain 'pending' in Rivery’s schema until the river processes actual new or changed data. Running the river without such data will not trigger a schema change in the target, even if the process completes with warnings.

Column-Specific Schema Changes

Dropping a Column in the Source Table: If a column is dropped in the source but still exists in the target, the corresponding field in the target table will be populated with null values.

Renaming a Column in the Source Table: When a column is renamed in the source, Rivery detects the change during the next river run. This results in a new column being added to the target table with the updated name, while existing records are duplicated with null values in the new column. This approach maintains data integrity while accommodating schema changes.

Adding a Column in the Source Table: Upon detecting a new column in the source, Rivery adds it to the target table during the next river run. Existing records in the target table, predating the new column, will have null values in this new column.

Changing Data Type of an Existing Column: If the data type of a source column changes, Rivery adjusts the target column to a "wider" data type. For instance, changing a field from an integer to a string in the source will result in the target column being set to a string. However, if a field is changed from a string to a date, it will remain a string in the target.

Sources Supporting Schema Drift

Schema drift is available for the following Sources:

- BigQuery

- MariaDB

- Microsoft SQL Server

- MySQL

- Oracle

- PostgreSQL

- Redshift

- Salesforce

- Teradata

- Vertica

MongoDB Considerations

As a non-schema-based database, MongoDB operates differently from traditional databases. To simplify the mapping process when working with MongoDB collections, we offer 3 powerful features. For more details, please consult our documentation.